Can You Ask Child Services for Time to Review a Safety Plan Before Signing It

Apple backs off controversial child-safety plans

The company now plans to take a few more months to collect input and make improvements before releasing the features, which drew fire from privacy advocates.

In a surprise Friday announcement, Apple said it will take more time to improve its controversial child safety tools before it introduces them.

More feedback sought

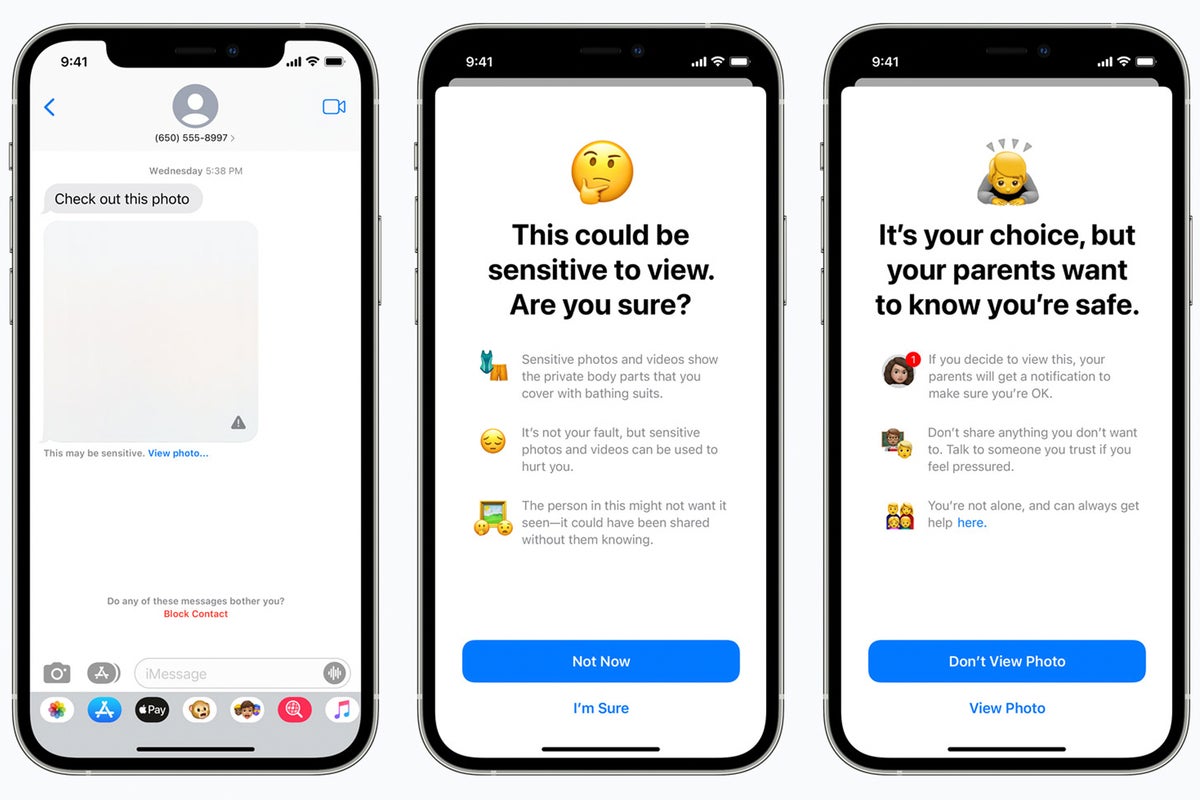

The company says it plans to get more feedback and improve the system, which had three key components: iCloud photo scanning for CSAM, on-device message scanning to protect kids, and search suggestions designed to protect children.

Ever since Apple announced the tools, it has faced a barrage of criticism from concerned individuals and rights groups around the world. The big argument the company seemed to have trouble addressing was the potential for repressive governments to force Apple to monitor for more than CSAM.

Who watches the watchmen?

Edward Snowden, accused of leaking US intelligence and now a privacy advocate, warned on Twitter, "Make no mistake: if they can scan for kiddie porn today, they can scan for anything tomorrow."

Critics said these tools could be exploited or extended to support censorship of ideas or otherwise threaten free thought. Apple's response, that it would not extend the system, was seen as a little naïve.

"We have faced demands to build and deploy government-mandated changes that degrade the privacy of users before and have steadfastly refused those demands. We will continue to refuse them in the future. Let us be clear, this technology is limited to detecting CSAM stored in iCloud and we will not accede to any government's request to expand it," the company said.

"All it would take to widen the narrow backdoor that Apple is building is an expansion of the machine learning parameters to look for additional types of content," countered the Electronic Frontier Foundation.

Apple listens to its users (in a good way)

In a statement about the delay, widely released to the media (on the Friday before a US holiday, when bad news is sometimes released), Apple said:

"Based on feedback from customers, advocacy groups, researchers and others, we have decided to take additional time over the coming months to collect input and make improvements before releasing these critically important child safety features."

It's a move the company had to make. In mid-August, more than 90 NGOs contacted the company in an open letter urging it to reconsider. That letter was signed by Liberty, Big Brother Watch, ACLU, Center for Democracy & Technology, Centre for Free Expression, EFF, ISOC, Privacy International, and many more.

The devil in the details

The organizations warned of several weaknesses in the company's proposals. One that very much cut through: that the system itself may be abused by abusive adults.

"LGBTQ+ youths on family accounts with unsympathetic parents are particularly at risk," they wrote. "As a result of this change, iMessages will no longer provide confidentiality and privacy to those users."

Concern that Apple's proposed system could be extended also remains. Sharon Bradford Franklin, co-director of the CDT Security & Surveillance Project, warned that governments "will demand that Apple scan for and block images of human rights abuses, political protests, and other content that should be protected as free expression, which forms the backbone of a free and democratic society."

Apple's defenders said that Apple had been trying to maintain overall privacy of user data while creating a system that could pick up only illegal content. They also pointed to the various failsafes the company built into its system.

Those arguments did not work, and Apple execs surely picked up on the same kind of social media feedback I saw, which reflected deep distrust of the proposals.

What happens next?

Apple's statement didn't say. But given that the company has spent the weeks since the announcement meeting with media and concerned groups from across all its markets on this matter, it seems logical that the second iteration of its child protection tools may address some of the concerns.

Please follow me on Twitter, or join me in the AppleHolic's bar & grill and Apple Discussions groups on MeWe.

Copyright © 2021 IDG Communications, Inc.

Source: https://blogs.computerworld.com/article/3632273/apple-backs-off-controversial-child-safety-plans.html
